This is part of a series on what we might need to rethink about our own capabilities, to do right by the Artificial Intelligence revolution. You can read the previous piece by pointing your most-clicky-finger right here.

Artificial Intelligence—as we now recognise it—started from the idea of a neural network. That is, an attempt to simulate how human brains learn, by connecting ‘neurons’ containing one piece of information to others.

For example, “red” + “yellow” + “bread” + “triangle” might be a pizza slice.

This simple principle allows us to identify a pizza slice on a footpath, or in a swimming pool, just as easily as on a plate in a restaurant.

Of course, while we understand the basic principles, it’s fair to say we still don’t have the full picture: How do experiences, senses, circumstances, emotions, genetics, age, space radiation, and so on contribute? We’re still working on that stuff in our broader attempt to grasp how things like consciousness, creativity, and our sense of purpose (beyond rote reproduction!) actually power the human experience.

There’s plenty to unravel among those mysteries but, for AI, there’s been a bigger problem we needed to deal with first.

It perhaps seems fairly straightforward to train a machine to recognise a slice of pizza, assuming all that’s required is the recognition of a few key attributes. Indeed, it might have been something we could have expected it to do fairly convincingly some time ago. And, by now, we certainly shouldn’t still be having to solve those silly CAPTCHA things to help train self-driving cars to recognise the difference between traffic lights and a bicycle, right?

In a sterile, highly-controlled environment it probably actually isn’t that hard. However, we’d also not be that impressed by a machine that could identify a pizza slice, vs. a hamburger or a hot dog, on a white table in a white room. Before we go bandying about words like “intelligent” for a machine like that, we’d want it to at least be able to function in an approximation of the real world!

But here is where this sort of thing is deceptively complex. Because you first need to remember that the most basic of these systems requires both the collection of information, and the reinterpreting of it for delivery. Obviously, just cataloguing information is worthless unless the system can do something useful with it; and, likewise, a fancy interface would never be considered intelligent if all it could do was spit out gibberish because it hadn’t adequately collected and sorted anything useful.

Kind-of an aside, but I expect this is why we often think of non-human species as less intelligent. It’s clear that many species are far more capable, communal, and resourceful than us in a whole load of respects. However, because they can’t tell us about it, they must just be ‘stupid’ I guess?

Anyway, that might all seem self-evident, but it’s an important position to appreciate, because if you think about how we thought we’d make Artificial Intelligence work—by sucking up data and connecting all the bits of it to all the other relevant bits, like our neurons—it was never really going to work. You’d end up with impossibly-large and interwoven databases, such that you’d need a planet-sized computer to do anything useful. And, even then, what you got out of it might not mean much, for us (spoiler alert).

Let me put the challenge into perspective for you. Think about how much information you can suck in, in a given moment; and therefore how much stuff your brain needs to ignore so that you don’t go absolutely insane:

Right now, I’m sitting on a bed, typing on a hot computer with a loud fan and a large, bright screen full of icons and notifications, on my lap. I’m trying to write this with a pair of nephews fighting down the hall, and I can look over the top frame of the screen to see out a window where leaves are dropping from a tree. There is the smell of coffee, a slight breeze from the heatpump, and a niggling concern that I should go and help start dinner. I have a slightly sore knee from a badly-landed jump between some rocks earlier in the day, and I’m wearing shorts so the skin on my crossed legs occasionally sticks. I can feel the wall at my back, the laptop keys as they bottom out on the ends of my fingers, the material of my clothes… You get the picture. That is a fraction of the thoughts and senses my brain is processing, in this one moment. How could we possibly do anything if our brains were unable to focus and dismiss the vast majority of that input?

So, building intelligence via simply ‘connecting everything’ turns out to be harder than it sounds, because the data is not grounded to anything. If I gave equal weight to all the information I’m being exposed to right now, I couldn’t possibly think… Already that coffee smell is becoming an increasing distraction for my brain!

Let’s loop back to the pizza slice example. “Yellow”, “red”, and “bread” could just as easily describe aspects of the hot dog or the hamburger, so part of processing the data requires an understanding that the “triangle” shape needs to be given a higher weighting.

This (heavily-simplified) example, of determining what things need to be important, for an AI model to operate effectively, is called “Attention”, and it is one key to making modern AI tools appear so much more ‘clever’. In AI circles, attention gets incorporated along with the data through a technology called a Transformer.
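If it helps to see that weighting idea in concrete form, here is a minimal Python sketch. It is only an illustration of "some features count for more than others", not how a Transformer actually computes attention: the feature sets, the `score` and `rank` helpers, and the weight of 5.0 are all hypothetical and hand-picked, whereas a real model learns its weights from data.

```python
# Toy illustration only: the foods, features and weights below are
# hand-picked assumptions that mirror the pizza example in the text.
FOODS = {
    "pizza slice": {"red", "yellow", "bread", "triangle"},
    "hot dog": {"red", "yellow", "bread", "cylinder"},
    "hamburger": {"red", "yellow", "bread", "disc"},
}


def score(observed, food_features, weights):
    """Weighted share of the observed features that this food also has."""
    total = sum(weights.get(f, 1.0) for f in observed)
    matched = sum(weights.get(f, 1.0) for f in observed if f in food_features)
    return matched / total


def rank(observed, weights):
    """Return (food, score) pairs, best match first."""
    scores = {name: score(observed, feats, weights) for name, feats in FOODS.items()}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


observed = {"red", "yellow", "bread", "triangle"}

equal_weights = {}                 # every feature counts as 1.0
shape_matters = {"triangle": 5.0}  # "triangle" gets a higher weighting

print(rank(observed, equal_weights))  # hot dog and hamburger still score 0.75
print(rank(observed, shape_matters))  # their scores drop to 0.375
```

With equal weights the hot dog and hamburger are uncomfortably close to the pizza slice; once the shape is weighted more heavily, the gap widens and the colour-and-bread overlap matters far less.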

I know it feels like I’m taking you down a rabbit hole here. It’s all to make you question the difference between “intelligence” and the “appearance of intelligence”.

A while back, I wrote about predictive text messaging and the precursors to modern AI.

My original Nokia phone was a long way from the capabilities of something like ChatGPT, but our general expectations of it were not so very different: Predicting the extremely-near future, whether that was the next word… or next image pixel… or next movement… or next scientific discovery.

You might think that ‘predicting a word’ and ‘answering a complex query’ are quite different tasks, but both work by sequentially producing an answer rather than fully-forming it. In many AI tools, you can see this happening as your answer is “generated”: Consider how a pocket calculator, given a query, just gives you the answer, while ChatGPT produces it word-by-word. And if you interrupt it to change a single word, it will throw the answer off in another direction.
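To make the word-by-word idea concrete, here is a deliberately tiny Python sketch. The `NEXT_WORD` table and the `generate` function are made up for illustration. A real system like ChatGPT weighs everything written so far and picks from probabilities; this toy only looks at the last word and always picks the same follower.

```python
# Hypothetical "next word" table: each word maps to one likely follower.
NEXT_WORD = {
    "my": "dog",
    "dog": "barked",
    "barked": "loudly",
    "loudly": "outside",
    "cat": "slept",
    "slept": "quietly",
    "quietly": "inside",
}


def generate(prompt, max_new_words):
    """Extend the prompt one word at a time, each choice feeding the next."""
    words = list(prompt)
    for _ in range(max_new_words):
        next_word = NEXT_WORD.get(words[-1])
        if next_word is None:  # nothing known follows this word, so stop
            break
        words.append(next_word)
    return " ".join(words)


print(generate(["my", "dog"], 3))  # my dog barked loudly outside
print(generate(["my", "cat"], 3))  # my cat slept quietly inside
```

Swapping a single word ("dog" for "cat") sends every later word somewhere else, because each step builds on whatever came before it.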

The “data” my Nokia phone was working with were the alphanumeric buttons I pressed on the phone keypad (after each ‘space’ character), and the output was a continuously-revised prediction of the word I was attempting to write.
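For the curious, that keypad trick can be sketched in a few lines of Python. The letter layout is the standard phone keypad one, but the word list, its ordering, and the `to_digits` and `predict` helpers are my own assumptions for illustration, not what any particular phone actually shipped with.

```python
# Standard phone keypad letters; the word list below is a hypothetical
# mini dictionary, ordered with the most common words first.
KEYPAD = {
    "2": "abc", "3": "def", "4": "ghi", "5": "jkl",
    "6": "mno", "7": "pqrs", "8": "tuv", "9": "wxyz",
}
LETTER_TO_DIGIT = {
    letter: digit for digit, letters in KEYPAD.items() for letter in letters
}
WORDS = ["good", "home", "gone", "hello", "dear", "bill"]


def to_digits(word):
    """The sequence of keys you would press to type this word."""
    return "".join(LETTER_TO_DIGIT[ch] for ch in word.lower())


def predict(keys_pressed):
    """Words whose key sequence starts with the keys pressed so far."""
    return [word for word in WORDS if to_digits(word).startswith(keys_pressed)]


# The guess is revised after every key press.
for presses in ("4", "46", "466", "4663"):
    print(presses, predict(presses))
```

Note that "good", "home" and "gone" all share the key presses 4663, so the phone can only offer its best guess first and let you cycle through the rest.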

What makes a Transformer AI much more powerful than this is that it can determine the context of a request by processing all of the parts of it in parallel, rather than one word at a time, and can therefore understand where its attention should be focused.

In simple terms, it’s like if I typed “Dear Bill” on my old Nokia phone and an animated paperclip popped up on the screen, saying “It looks like you’re trying to write a letter?”

That is a joke of course. But I mention it to demonstrate how apparent intelligence can have little to do with facts and figures, but rather the ability to divine a sort of ‘hidden purpose’ in any limited information at hand. Specifically, in that example, being attentive to the convention that any word immediately preceded by “Dear …” suggests a letter is being written, despite the raw data in “Bill” and “Dear” simply representing two, equal, 32-bit blocks of otherwise random data.

This, incidentally, is something the human brain does exceptionally well, even with all the myriad inputs bombarding it right from (and this is not an argument I’m going to get into here!) roughly 30-35 weeks after conception. We have a natural ability to capture millions of data inputs across time and type, and inherently filter in those which are important for our survival, goals and happiness.

I say, still writing from my sensorial spot on the bed.

So, as the Google-based engineers who worked out the practical implications of this, and effectively invented the modern Transformer approach, succinctly noted: “Attention is all you need”. In practice, this is what allows a modern AI to be given a prompt like “Write a story about dogs in the style of Shakespeare”, and have it produce a block of text that not only makes sense from one word to the next, but has a narrative arc, involves a dog as the protagonist, and keeps to iambic pentameter until the very last word.

This might seem like a silly example because, even if you might find it hard to complete that specific task, it’s easy for humans to understand what things are important to produce something from that prompt. On the other hand, a simple neural network, even pumped full of the complete works of Shakespeare, The Dog Encyclopedia, as well as The Hero with a Thousand Faces, Save the Cat! and Steering the Craft, still couldn’t do it, because it couldn’t work out a consistent focus.

All that said, basically all the new hotness in AI uses these “Attention” fundamentals. And that includes what you’re likely accustomed to thinking of as ‘modern’ AI Large x Models (the “x” most commonly refers to “Language” right now, although sometimes it’s (robot) “Action”—regardless, those are unlikely to be the final variations to that acronym). In a typical example, a Large Language Model (LLM) is a pile of data, which is turned into billions of smaller tokens representing fragments of that data—a bit like our ‘yellow/red/bread/triangle’—and then a massive database gets built to connect identified patterns and attention. While this can be done in a small way for specific tasks, the diversity and large scale are a big factor in delivering the “wow” that these systems enable; it’s a bit like giving an AI more “experience”.

Like a friend who has travelled overseas more than you, and therefore has more interesting stories to tell at parties.

You’re undoubtedly also familiar with terms like “GPT” (Generative Pre-trained Transformer), which essentially describes how these LxMs make their connections. As you can probably guess, these generate an output (e.g. text), having been pre-trained with large amounts of data in a way that collects not just knowledge but also an understanding of how to focus on it. The innovation of GPT, and the reason why systems like ChatGPT are so impressively able to re-contextualize apparently disparate ideas, is that the AI model is largely given unorganised data to look at (for example, just chunks of the internet), but allowed to identify its own connections and priorities based on context and weighting, without substantive human direction.

As an aside, this process requires masses of computing power and energy.

Anyway, one other advantage of this ‘Transformer’ style of training AI models is you can essentially point it at any data that you can break into tokens—images, audio, video, x-ray scans, medical records, faces, weather patterns, physical movement, DNA… and let it determine how the data could be connected and prioritised. That has the obvious potential to disrupt things far beyond just the writing of Excel formulas or silly poems, or even creating AI audio and visual media. Which is why I’m tending to hedge my bets with the acronym “LxM” here.

So. Human Intelligence

Maybe that potential for disruption is freaking you out a bit. But, hopefully, you’re also wondering if there might be more to intelligence than the guys trying to sell you on the power of Artificial Intelligence are implying. We discussed that a little in the last piece:

As a fellow human (also, “hello bots crawling this page”), you probably do find yourself occasionally frustrated by your biological flaws. And you certainly appreciate a monstrous data centre is likely to be better at some things than you—like remembering names, or where you left your car keys.

But, it’s still worth taking a moment to recognise that it’s pretty freakin’ amazing what a single human brain can do, powered by some left-over fried chicken and a Mountain Dew!

I point that out just to remind you that memory alone, and even the re-configuring of memory fragments into new patterns and combinations, is clearly not all there is to human intelligence.

Those aspirations of building a digital neural network didn’t come from nothing.

In a fraction of a moment, we embed and associate information; such that something as insignificant as a subtle smell or the opening chord of a piece of music can surface clear feelings and memories in an instant. That is all fragments of data being tickled and retrieved. We may have some storage limits for precision data (like where we left the car keys), but we have an interesting other side to our intelligence, which is an extra layer, provided by ‘feelings’. We intuitively all understand that love, fear, disgust, or joy are a big part of making connections stick, and also, in my opinion, are likely a big part of how we achieve such effortless focusing and filtering. That ability is something we can’t know if AI will ever be able to replicate.

And that is really the underlying question bubbling through this whole series. How much are emotions integral to intelligence?

Earlier, I was talking about how both collecting data, and reinterpreting it for delivery were key to the appearance of intelligence. AI, with the sorts of technologies we’ve discussed, already has the potential to do those things pretty well. Indeed, there are many ‘tasks’ that the trajectory we’re on will guarantee AI does much better than humans. But there’s something else I think might be needed, to distinguish an ‘intelligent species’ from the ‘useful personal assistant’ that many people increasingly believe represents artificial intelligence, and that is motivation.

How much does the fear of death inspire our creativity? How much does jealousy or love or insecurity illuminate our philosophy? …Greed, our strategy? …Boredom, our risk-taking?

As a human, you don’t need to be consciously aware that these sensations are triggering your thinking, but what would you be without them? Our brains can collect, link, and reconfigure data; and our mouths, hands, and bodies can express it. But without any feelings, there would be no interest in acting on it, or concealing it, or contemplating it.

For the sake of our topic, you could think of these sorts of emotions like a kind of biological inductive bias.

…This is getting into the weeds a bit, but it’s interesting to wonder whether actual intelligence requires a reason to ‘think’. An AI can, of course, process information, but only in the context of observation and rules. So, “inductive bias” in AI is designed as a ‘nudge’ to help guide how the entity should respond to a query or information outside its previous experience.

At an extremely-simplified level, an inductive bias might allow a system to conclude that, because it knows “20+20=40” and “200+200=400”, then it’s fair to assume “2000+2000” will likely equal “4000”—even without it knowing how math works. But it’s not just recognising patterns, it’s a guide to help the AI to swing in the right direction when it’s thrown a curve ball: A way of framing that, if done right, allows it to address a near-limitless range of queries. And, when poorly implemented, can result in something like diversity being prioritised above historical reality.
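Here is one way that “20+20” example could be sketched in Python. To be clear, this is my own toy framing, not how any real AI system is built: the `memoriser` and `scaler` functions are hypothetical, and the built-in assumption (“if every input grows by the same factor, so does the answer”) is just one possible bias I have chosen for illustration. Neither function knows how addition works.

```python
# The only "experience" either learner has: two worked examples.
EXAMPLES = {(20, 20): 40, (200, 200): 400}


def memoriser(query):
    """No inductive bias: it can only repeat answers it has already seen."""
    return EXAMPLES.get(query)


def scaler(query):
    """Hypothetical bias: if every input grows by the same factor,
    assume the answer grows by that factor too."""
    for inputs, answer in EXAMPLES.items():
        ratios = {q / i for q, i in zip(query, inputs)}
        if len(ratios) == 1:  # all inputs scaled by one common factor
            return answer * ratios.pop()
    return None  # the bias offers no guidance for this query


print(memoriser((2000, 2000)))  # None
print(scaler((2000, 2000)))     # 4000.0
print(scaler((20, 30)))         # None
```

The nudge lets the second learner answer a question it has never seen, but it also shows the limits: throw it “20+30” and the same bias has nothing to offer, because the assumption was only ever a guide, not an understanding.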

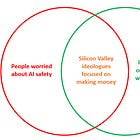

The reason I bring that all up is because this is one of the useful shortcuts required to make a tool appear more insightful and self-aware, in the absence of something like our biological feelings. And, it can genuinely help to sell the idea that these machines can ‘think’ and ‘make decisions’ because it allows them to reach conclusions even from new problems. However, I’d argue the design of inductive bias is closer to human “ideology” than actual intelligent thought—it is something that encourages us towards certain conclusions when faced with new information, but is also pretty fragile when pitted against real (or contradictory) emotions like fear, jealousy, or love.

It seems to me another example of us underestimating the scope of our intelligence.

The point is, a cleverly-manufactured trick like inductive bias can cause a machine to make “choices” and even possess what looks like “ethics”, but not with the same precedence as, say, our fear of death. And, without that, is it fair to wonder if AI could ever really credibly think for itself? An emotion like ‘fear’ is what propels us to achieve and be remembered, so requires us to be able to think for ourselves (even if much of our time in modern life rewards us for not using this ability). Without factoring that into our definition of intelligence, I’m just not sure if we can understand what the implications of ethics-by-algorithm actually might be.

The aspiration for many in the AI sector is to build an Artificial General Intelligence (AGI) that can adapt to perform a wide range of tasks without needing specific training, just as a human brain can. I can’t say for certain that an AI will never know ‘love’ or ‘fear’, in that context, but it’s worth considering why it would need to. And, to draw an obvious corollary, it’s worth questioning whether, to be a truly intelligent “equal”, these things would need to feel fear, or need to feel love. Would they need some non-rational drive to periodically divert them away from problem-solving and into things like creating art just for the sake of it (if, indeed, art is a sign of intelligence)?

If a machine draws a picture, how does it know to feel pride in its work?

In spite of all Hollywood’s imaginings about “genuine-feelings” robots, I just don’t know why an engineer would/could ever build that into a model. I don’t know why a model would evolve emotions into itself.

To what end would ‘limitations’ like that exist, for a species that is inherently immortal and has no need to organically reproduce?

We still don’t fully understand the brains and decision-making processes of even our fellow symbionts, and they share our capacity for fear, pain and guilt. Meantime, we’re emptying our minds and collected history into these new things (via the convenient mechanics of the internet). But we’re not just doing it, we’re then busting to call it intelligent, without really questioning what intelligence requires.

For any developers out there who would hope to create a true sentient AI (for whatever reason you might feel compelled to do that!), might I suggest you simply program an unassailable mortality into your model?

Maybe only in that truncated window could a sufficiently-powerful artificial application evolve into artificial intelligence?

Why does any of this matter? If it walks like intelligence and quacks like intelligence, is it not near-enough to be called intelligence? AI has the potential to be smarter, faster, and more aggressive than us, plus the potential to be self-reinforcing; it’s not so far from being able to independently trade financial instruments, place orders for components, design and build superior versions of itself, all at an exponentially faster rate than any human technological adoption. It doesn’t matter that we don’t have a complete picture of what intelligence is: if we don’t ask these questions, these tools will make enough of us believe they are intelligent and submit to their superiority. But that means submitting to an entity with knowledge, logic, and task-orientation, but without the protection of limitations.

That doesn’t mean we have to stop everything and miss out on progress. If we just demand intelligence should be holistically measured and not just subjectively observed, we can still use ‘AI’ as an amazing tool for supplementing our abilities. I’m just not sure we should be rushing to endow it with more status than that in our collective zeitgeist.

But what if we do? Next time…

-T