Hello readers. Many of you weren’t on my list back when I wrote my previous series on AI. While I tend to spread themes across multiple posts on here, I do try and write each piece to stand alone as well.

Still, if you get to the end and find yourself wanting more of my flavour of thinking about it all, I’d encourage you to whip back and take a look. Or subscribe. There’s more to come…

There’s a thing you’ll have noticed happening, which is that a tiny handful of people are pushing ahead with a self-actualising agenda that will clearly impact the lives of everyone else for years to come; and most of us just have to stand by helplessly and have it done to us.

Of course, technological revolutions do tend to happen a bit like that but, for most of our history, it’s just been hard to watch them play out in real time.

I’m not really suggesting this is objectively bad; it’s how these things work. That said, I’m not a believer in the idea of once-in-a-generation individuals who are born with some genius world-changing abilities; I believe, rather, in individuals who just happen to be the right general personality, swimming in the right waters as humanity as a whole shunts forward on the back of collective knowledge and cultural readiness. So, I’m perhaps just a little less willing to concede our autonomy to these individuals than some of their disciples might be.

Anyway, if there’s anything different about this particular morphing-of-our-world, via this Artificial Intelligence (AI) ‘revolution’, compared to, say, the development of printing, electricity harnessing, nuclear energy, fire, stone tools, and so on, it’s that it is now driven by an intentional hype machine, and not just organic discovery.

So, in my previous series about AI, I was writing about what this technology might mean, practically, for humanity. But, I’ve also been thinking about what the way we understand this AI era tells us about ourselves.

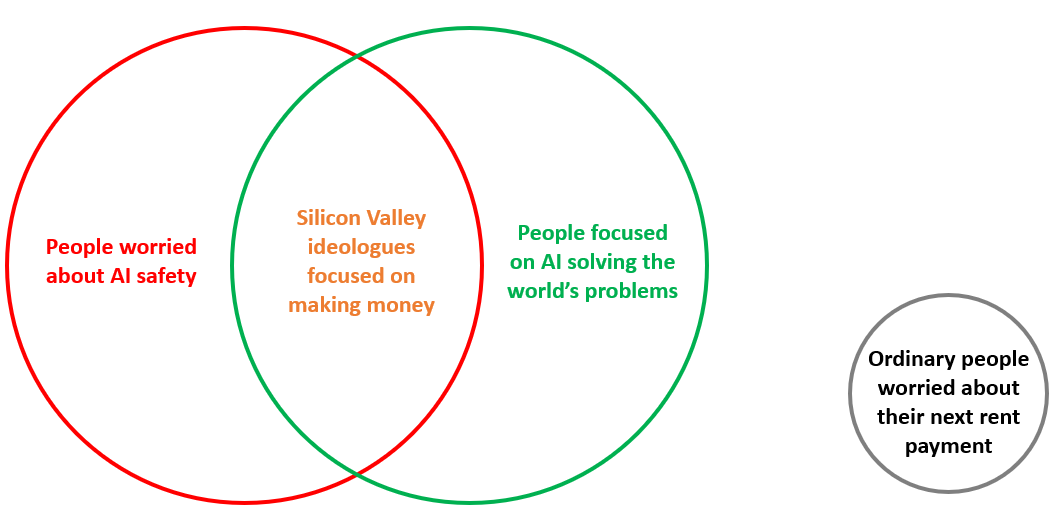

It feels to me like there are three main attitudes about “AI”—as a general concept—right now:

If you’re thinking about AI, you’re basically thinking about it as some degree of universal good or universal threat. And, it is at the intersection of those attitudes where there are people who believe they can manage any potential threat: The people making money out of it.

There is, of course, another group of people who don’t have the mental energy to have an opinion about it either way. But this is why the inclusion of a hype machine threading through this particular revolution makes this one a bit different from previous species-level technological adjustments.

You’ve probably come across the concise principle often attributed to P. T. Barnum, “There’s no such thing as bad publicity”; it’s simplistic, but I do think it applies especially well here. If those in the money-making-middle can just migrate a few more of the people from the grey circle into one of the green or red ones (which they do essentially by making people believe that AI is, or could one day be, “equivalent to a human in its capabilities”) then they can make money from simply promising to be able to handle the threat.

And to be clear, despite all the posturing about it, we genuinely still don’t know if we can build (or evolve) a true Artificial General Intelligence (AGI) to rival the range of human capability (not that, as you’ll see, measuring it against human capability may even matter). Even with the impressive progress we’ve made on AI tricks—like conversational text generation, image, audio, video, and research—in just the past couple of years, there’s all manner of potential walls we are yet to hit, as AI innovation collides with increasing scale and increasingly regressive model training, energy use, regulation, ownership questions… Not to mention how access to this technology might cause social, geographic, and class division.

Importantly though, in the meantime, the goal requires us to grant AI all sorts of “human” rights, including the right to hoover up all the world’s knowledge without any financial recourse for its original creators; the right to operate freely in the marketplace of ideas; and, most importantly, the right to be regulated by people who ‘understand’ it.

This is the conflict though. We’re doing this without understanding human capabilities especially well.

Consider the simple idea of a “6th sense”. We’ve all experienced something akin to it: You’re driving and just know that guy is going to pull out of an intersection on you, even though their car hasn’t moved; or you sense something might be wrong with a close family member on the other side of the world; or you’re in a bar and, with barely a glance, you immediately can tell someone is about to crudely hit on you, before they’ve so much as opened their mouth; or you have one of those lightbulb moments where you understand a thing in a way that seemingly no-one else has before.

You know, stuff we might call “gut feelings”.

I’m not making the case here for some kind of mysticism, but there’s no human on earth who hasn’t experienced some unexplainable and undocumentable super-humanity that goes beyond our standard, recognisable, senses and knowledge. It remains just a bit mysterious.

The thing is, not even science—the thing attempting to build these Artificial Intelligences we hope to solve our problems and deliver us luxurious full-time recreation—has a grasp of this stuff. And, maybe it never will.

Because, how would you even study these discrete super-humanity moments? Maybe these things might be explained by unseen or subconscious interactions between multiple known senses? Or, maybe there are physical or spiritual factors we just haven’t discovered yet that will explain it? Or, maybe it simply won’t matter anyway, if we get the technology balance just right?

Regardless, our ongoing cultural fascination with magic, and with these ‘senses’ beyond what we can quantify, centuries after The Enlightenment made it quite clear that “science” was the only rational way to understand the world, should give us pause about how much certainty we should be taking into our (contemporary-knowledge-enabled) creation of a new “intelligent” species sans whatever “limits” our species has evolved for survival.

For all the anthropocentric damage and destruction we’ve done, we've always loved the idea of a species living alongside us that is our equal. We’ve spent all of recorded history imagining gods and aliens and anthropomorphised animals, to give us coeval company on this planet.

Is it any surprise that we're drawn to AI?

But, despite all that invested imagination and thought, I’m not certain we understand what a species that is an intellectual ‘equal’ (or ‘better’) of us even is.

And, it starts with not really understanding what we are all about.

See, humans take a lot of shortcuts to optimise our brain’s processing power, so we very often revert to an apparent “autopilot” state of mindlessly [sic] following patterns or rules. The result is that what looks like intelligence can actually just be a ‘vacant being’ following carefully designed rules and patterns.

An example might be a highway full of autonomous cars, each operating from just two simple rules:

Stay on the road

and

Don’t collide with other vehicles

Just those basic rules are all that’s required for some remarkably ‘intelligent’ maneuvering, dodging and ducking, patience, and even innovation. Cars, given those rules (and appropriate input sensors to allow them to interact with their surroundings) will change lanes, redirect, and obstruct each other, all without needing to do any real ‘thinking’ about it.
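For the curious, here is a minimal sketch of that idea in Python. Everything in it is an assumption made for illustration: the ring-shaped road, the grid of cells, the `period` that makes some cars slower than others, and the names `step` and `simulate`. It is a toy, not a model of real traffic, but the cars in it obey nothing beyond the two rules above.

```python
import random

LANES, LENGTH = 3, 40   # assumed toy road: 3 lanes, 40 cells, joined in a ring

def step(cars, tick):
    """Advance every car one tick using only the two rules."""
    occupied = {(c["lane"], c["pos"]) for c in cars}
    lane_changes = 0
    for car in cars:
        if tick % car["period"] != 0:      # slower cars move less often
            continue
        ahead = (car["pos"] + 1) % LENGTH
        # Candidate moves: straight on first, then either neighbouring lane.
        for lane in (car["lane"], car["lane"] - 1, car["lane"] + 1):
            on_road = 0 <= lane < LANES              # rule 1: stay on the road
            free = (lane, ahead) not in occupied     # rule 2: don't collide
            if on_road and free:
                occupied.remove((car["lane"], car["pos"]))
                occupied.add((lane, ahead))
                lane_changes += lane != car["lane"]
                car["lane"], car["pos"] = lane, ahead
                break
        # If no candidate is legal, the car simply waits this tick.
    return lane_changes

def simulate(n_cars=30, ticks=200, seed=1):
    rng = random.Random(seed)
    all_cells = [(lane, pos) for lane in range(LANES) for pos in range(LENGTH)]
    cars = [{"lane": lane, "pos": pos, "period": rng.choice((1, 2, 3))}
            for lane, pos in rng.sample(all_cells, n_cars)]
    lane_changes = collisions = 0
    for tick in range(ticks):
        lane_changes += step(cars, tick)
        cells = [(c["lane"], c["pos"]) for c in cars]
        collisions += len(cells) - len(set(cells))   # two cars sharing a cell
    return {"lane_changes": lane_changes, "collisions": collisions}

if __name__ == "__main__":
    print(simulate())
```

Nothing in the code mentions overtaking, yet faster cars end up weaving around slower ones, because a lane change is just the first legal move left when the cell ahead is taken.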

Of course, driving-in-practice always appears far more complex, but that seeming complexity comes from the integration of our human senses, experience, and insights working together in concert (our “input sensors”) to essentially just adhere to those same two basic rules.

Further, what looks like intelligence can equally be a series of ever more precise guesses. If you perfectly observe a coin toss enough times, you could get very good at guessing the outcome based on spin speed, height, and other variables. The human sensory system can’t do this, but a machine potentially could. An ability like that (able to correctly predict a coin toss!) would appear super-intelligent. But is it? Or is it just another example of rule-following, one step removed, such that the system is devising its ‘own’ (black box [1]) rules for determining when a Head or Tail might surface?
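As a rough illustration of the coin-toss guesser, here is a small Python sketch. The physics is a deliberately crude assumption (the coin starts heads-up and flips once per half turn), the ranges for spin and airtime are invented, and `toss`, `bucket`, `learn`, `predict` and `accuracy` are hypothetical names. The 'machine' is never told the physics; it only tallies what it has seen for similar-looking tosses.

```python
import random

def toss(spin, airtime):
    """Toy physics (an assumption): heads-up start, one flip per half turn."""
    half_turns = int(2 * spin * airtime)
    return "H" if half_turns % 2 == 0 else "T"

def bucket(spin, airtime):
    # Coarse-grain the observation; the grid resolution is an arbitrary choice.
    return (int(spin * 10), int(airtime * 1000))

def random_toss(rng):
    spin = rng.uniform(20, 40)        # revolutions per second (assumed range)
    airtime = rng.uniform(0.4, 0.6)   # seconds in the air (assumed range)
    return spin, airtime

def learn(n_observations, rng):
    """Tally outcomes per bucket: the machine's 'own' rule table."""
    table = {}
    for _ in range(n_observations):
        spin, airtime = random_toss(rng)
        counts = table.setdefault(bucket(spin, airtime), {"H": 0, "T": 0})
        counts[toss(spin, airtime)] += 1
    return table

def predict(table, spin, airtime):
    counts = table.get(bucket(spin, airtime))
    if counts is None:
        return "H"   # never seen anything similar: a blind guess
    return "H" if counts["H"] >= counts["T"] else "T"

def accuracy(n_observations, n_trials=2000, seed=0):
    """Fraction of fresh tosses called correctly after n_observations."""
    rng = random.Random(seed)
    table = learn(n_observations, rng)
    hits = 0
    for _ in range(n_trials):
        spin, airtime = random_toss(rng)
        hits += predict(table, spin, airtime) == toss(spin, airtime)
    return hits / n_trials

if __name__ == "__main__":
    for n in (100, 10_000, 200_000):
        print(n, accuracy(n))
```

With only a handful of observations the guesser is close to flipping its own coin; with many, it calls most tosses correctly. The resulting table is also a fair picture of a black box: thousands of tallies, and no line anywhere that says 'count the half turns'.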

Indeed, it's not even especially hard to appear supremely intelligent using some combination of a little observation and confidence. Case in point: the entire, multi-trillion-dollar, global finance sector is built on people we laud as “brilliant intellectuals”, but who largely are just observant and confident enough to bet correctly at least 51% of the time.

That example also highlights one other thing that can look like intelligence, but arguably isn’t, and happens when you're dealing with very large samples. Like the finance sector, the insurance industry is built on the idea that collecting and manipulating basic data, but at gigantic scale, gives you a quite magical ability—apparent super intelligence you might say—to predict outcomes with spooky accuracy: When you’re likely to die, of what cause, and how many claims you’ll have made on your 2006 Honda sedan between now and then.

That, incidentally, is how we know for certain that climate change is a real thing. Because insurance companies are looking at their giant data hauls and freaking out. When it comes to the truth about climate change, we should consider the need for insurance companies to keep their shareholders happy as its own kind of emergent super-intelligence about the topic!
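The large-sample effect that insurers rely on is easy to sketch in Python. The 5% claim probability is a made-up figure, and `observed_claim_rate` and `standard_error` are hypothetical names; the only real ingredient is the standard formula for the standard error of a proportion, sqrt(p(1 - p) / n).

```python
import math
import random

CLAIM_PROBABILITY = 0.05   # assumed chance that one policy claims in a year

def observed_claim_rate(n_policies, rng):
    """Simulate n independent policies and return the share that claimed."""
    claims = sum(rng.random() < CLAIM_PROBABILITY for _ in range(n_policies))
    return claims / n_policies

def standard_error(n_policies, p=CLAIM_PROBABILITY):
    """Typical gap between the observed and the true claim rate."""
    return math.sqrt(p * (1 - p) / n_policies)

if __name__ == "__main__":
    rng = random.Random(42)
    for n in (100, 10_000, 1_000_000):
        rate = observed_claim_rate(n, rng)
        print(f"{n:>9} policies: observed {rate:.4f}, "
              f"typical error {standard_error(n):.4f}")
```

No single policyholder becomes any more predictable; the error on the pooled rate just shrinks with the square root of the pool size, so a hundred times more policies gives roughly ten times the precision. In this sketch, at least, that is the whole of the 'spooky accuracy'.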

Anyway, my point is that if this sort of thing represents the nature of our present understanding of intelligence, let's not overestimate our grasp of it.

That’s enough for now… to be continued.

-T

[1] In AI (and science more broadly), a ‘black box’ describes a system where you can observe the inputs and outputs without having an understanding of its inner workings. In other words, the (potentially risky) state of not knowing what actual variables the system uses to reach conclusions.

Love this. Look forward to the next installment

History tells us that most of the people who earned a reliable income during the gold rush were the ones selling shovels. And the shovel salesmen are out in force these days. For those who can't afford to buy a shovel, they'll rent you one for twenty bucks a month.

On April 7, when daylight saving ended and we turned our clocks back an hour, I asked Microsoft's Copilot for the current time (https://tinyurl.com/WhatsTheTimeInNZ). If AI can't tell us the time, I'm not sure it can tell us how to deal with climate change.